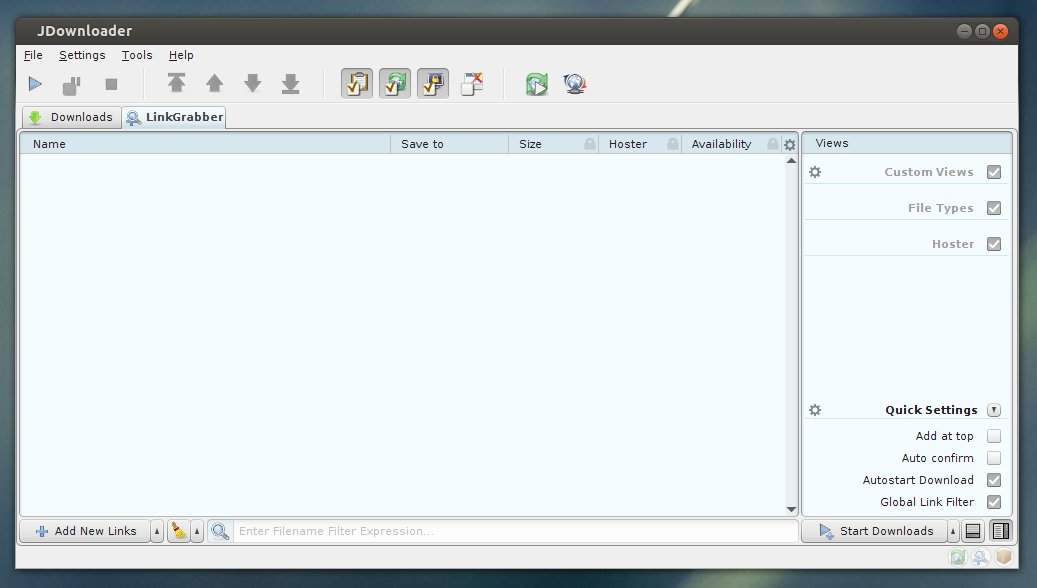

This is a real hassle, because it's almost impossible to select multiple files in a package and then download only those. In the process it deselects anything I've marked before. But I've run into a few issues anyway and hope you can help me.ġ) Once I fill the Linkcollector with packages - import of the *.dlc files works just fine without the need for any extra configuration - the web app keeps updating the information frequently. I've had no problems setting it up, because that part is actually idiot proof. For that purpose I've set up a Samba share to where I'll download all *.dlc files, which are then grabbed by the Folderwatch plugin/extension. crawljob file or see attached files example1.crawljob and example1_'ve long used JD2 happily and successfully, but now that I've set up my own Linux server, I'm trying to run a headless instance of the client. It will be moved to the "added" folder anyways! Please keep in mind that syntax errors in your json will lead to a failure when trying to process your crawljob. The above example is attached to this article as example1_json.crawljob! "downloadFolder": "C:\\Users\\test\\Downloads", The above example is attached to this article as example1.crawljob!įormat 2: json format: You can put multiple crawljobs into one file: crawljob file and moving this file into the folder which JD is watching.įormat 1: Text format. URLs which will contain more (direct-downloadable) URLs inside HTML code.Īdd dummy-offline link if added items were processed by a crawler and this crawler has detected that the URL you were trying to crawl is offline.ĮxtractPasswords= Useful for URLs of websites which are not supported via JD plugin e.g. Should properties set via this crawljob overwrite packagizer rules?ĭeep-analyze URLs added via this crawljob? Use only if text contains a single URL only!Īuto start downloads after this item has been added to the LinkGrabber? Set this if password is required to add this URL e.g. 
Field nameĮnable/disable items added via this crawljob You may as well leave out fields completely instead of using the value UNSET. UNSET = Existing global setting will be used Here is an overview of all possible crawljob fields: DLC containers or normal URLs! The FolderWatch extension has to be enabled though to make this work! crawljob files to the LinkGrabber just like adding. to add URLs once with custom package name/download path and so on! crawljob files can also be used without FolderWatch to e.g.

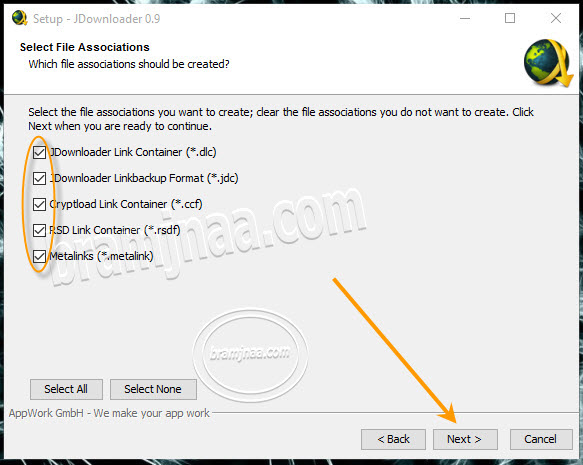

crawljob files, you can tell JD how to process the URLs which will get added whenever it processes said. If you want to use the full potential of Folder Watch, continue reading! DLC container will be moved to "folderwatch/added". DLC container will appear in your LinkGrabber and the. After some seconds, the URLs inside your. DLC file in the above mentioned "folderwatch" default folder.Ĥ. Delete the previously exported URLs in JD and move the created. DLC container via Rightclick -> Other -> Create DLCģ. Open JDownloader and export some added URLs as. To start you can do this simple test using a. Processed files will be moved to a subfolder inside the watched folder called "added", e.g.

running JD on a server and want it to process all URLs added via this method without the need of any further user interaction in JD.Īfter activating the Folder Watch addon, JD is by default monitoring the folder /folderwatch every 1000ms.

Posted by pspzockerscene psp, Last modified by pspzockerscene psp on 08 February 2022 03:20 PMįolder Watch is an addon which can be installed and enabled via Settings -> Extensions (scroll down) -> Folder Watchįolder Watch allows you to let JD monitor one- or multiple folders for special.
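On a headless server, a crawljob file can also be generated by a script rather than written by hand, which avoids the JSON syntax errors that make processing fail. The following is only a minimal sketch in Python and not part of JDownloader. It assumes that the json format is an array of objects, one per crawljob. The helper name `write_crawljob`, the URL and the temporary directory standing in for the watched folder are all hypothetical. `text` and `downloadFolder` are the only field names used, since both are mentioned in the article.

```python
import json
import tempfile
from pathlib import Path


def write_crawljob(watch_dir, jobs, name="example1_json.crawljob"):
    """Serialize crawljob dicts as one JSON array and save it in watch_dir."""
    # json.dumps always emits syntactically valid JSON and escapes
    # backslashes, so a Windows path is stored as "C:\\Users\\..." on disk.
    payload = json.dumps(jobs, indent=2)
    target = Path(watch_dir) / name
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(payload, encoding="utf-8")
    return target


# Hypothetical job: the URL is a placeholder, and the array-of-objects
# layout is an assumption about the json crawljob format.
jobs = [
    {
        "text": "https://example.com/file.zip",
        "downloadFolder": r"C:\Users\test\Downloads",
    }
]

# A temporary directory stands in for the real watched folder here.
demo_dir = tempfile.mkdtemp()
written = write_crawljob(demo_dir, jobs)
print(written.read_text(encoding="utf-8"))
```

To try this against a real instance, `demo_dir` would be replaced by the folder that Folder Watch is monitoring. Building the file from Python dictionaries instead of string concatenation keeps quoting and backslash escaping out of your hands.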
